System Haptics: 7 Revolutionary Insights You Must Know

Ever wondered how your phone buzzes just right or your game controller mimics real-world textures? That’s the magic of system haptics—where touch meets technology in the most immersive way possible.

What Are System Haptics?

System haptics refers to the integrated technology that delivers tactile feedback through vibrations, forces, or motions in electronic devices. Unlike simple vibration motors, modern system haptics are engineered to simulate realistic sensations, enhancing user interaction across smartphones, wearables, gaming consoles, and even medical devices.

The Science Behind Touch Feedback

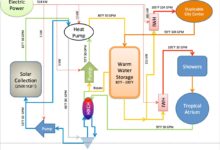

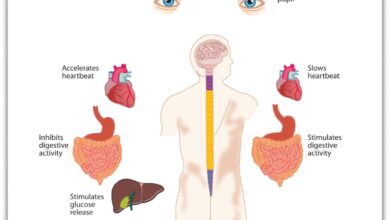

Haptics is rooted in haptic perception—the human ability to understand the world through touch. System haptics leverage this by using actuators, sensors, and software algorithms to generate precise physical responses. These responses are synchronized with visual or auditory cues to create a multisensory experience.

- Actuators convert electrical signals into mechanical motion.

- Sensors detect user input and environmental changes.

- Software interprets actions and triggers appropriate haptic effects.
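The sensor, software, and actuator roles listed above can be sketched as a tiny pipeline. This is only an illustrative model: the event labels, the `BASE_EFFECTS` table, and the pressure scaling rule are assumptions made for the example, not any vendor's actual design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TouchEvent:
    kind: str        # hypothetical labels, e.g. "tap" or "long_press"
    pressure: float  # normalized 0.0-1.0, as a force sensor might report


@dataclass
class ActuatorCommand:
    amplitude: float  # normalized drive strength, 0.0-1.0
    duration_ms: int


# Software layer: an assumed lookup from event kind to a base effect.
BASE_EFFECTS = {
    "tap": ActuatorCommand(amplitude=0.4, duration_ms=10),
    "long_press": ActuatorCommand(amplitude=0.8, duration_ms=30),
}


def render(event: TouchEvent) -> Optional[ActuatorCommand]:
    """Map a sensed event to an actuator command, or None if no effect applies."""
    base = BASE_EFFECTS.get(event.kind)
    if base is None:
        return None
    pressure = min(max(event.pressure, 0.0), 1.0)
    # Scale between 50% and 100% of the base amplitude as pressure rises.
    amplitude = base.amplitude * (0.5 + 0.5 * pressure)
    return ActuatorCommand(amplitude=amplitude, duration_ms=base.duration_ms)
```

Keeping the mapping in a table rather than in branching code is one way to let designers tune effects without touching the input handling.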

According to research published on ScienceDirect, advanced haptic systems can simulate textures, temperatures, and even resistance, making digital interactions feel startlingly real.

Evolution from Simple Vibration to Smart Feedback

Early mobile phones used basic eccentric rotating mass (ERM) motors for alerts. These produced a single, coarse vibration. Today’s system haptics use linear resonant actuators (LRAs) and piezoelectric elements for faster, more nuanced responses.
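A little textbook physics helps explain why ERM feedback feels coarse. In an ERM, the force comes from a spinning off-center mass, so strength and frequency rise together. An LRA behaves like a mass on a spring with a fixed resonant frequency, so its strength can be varied at that frequency. The sketch below uses those two standard formulas; the component values in the usage note are made-up illustrative numbers, not measurements from any device.

```python
import math


def erm_force_newtons(mass_kg: float, radius_m: float, rpm: float) -> float:
    """Centripetal force of an eccentric mass: F = m * r * omega^2."""
    omega = 2 * math.pi * rpm / 60.0  # angular speed in rad/s
    return mass_kg * radius_m * omega ** 2


def lra_resonant_hz(spring_n_per_m: float, mass_kg: float) -> float:
    """Resonant frequency of a mass-spring system: f = sqrt(k / m) / (2 * pi)."""
    return math.sqrt(spring_n_per_m / mass_kg) / (2 * math.pi)
```

With an assumed 1 g mass at a 2 mm offset, doubling the ERM's speed from 6,000 to 12,000 rpm quadruples the force, which is why an ERM cannot change how hard it buzzes without also changing how fast it buzzes.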

“Haptics is no longer about vibration—it’s about communication through touch.” — Dr. Lynette Jones, MIT Senior Research Scientist

For example, Apple’s Taptic Engine in iPhones uses LRAs to deliver context-sensitive taps, clicks, and pulses that mimic physical buttons, even on a flat screen. This evolution marks a shift from generic alerts to intelligent, emotionally resonant feedback.

How System Haptics Work: The Core Components

Understanding system haptics requires breaking down its three foundational elements: hardware, software, and integration. Each plays a crucial role in delivering seamless tactile experiences.

Hardware: Actuators and Sensors

The physical components of system haptics include actuators (components that create movement) and sensors (devices that detect touch or pressure). The most common actuator types are:

- Linear Resonant Actuators (LRAs): Use a coil to drive a spring-mounted magnetic mass back and forth along a single axis, producing fast, crisp vibrations. Found in most modern smartphones.

- Piezoelectric Actuators: Respond to electric voltage with precise micro-movements. Ideal for high-frequency feedback in wearables.

- Eccentric Rotating Mass (ERM) Motors: Older technology with a rotating weight. Still used in budget devices due to low cost.

Sensors like capacitive touch detectors, force sensors, and accelerometers feed real-time data to the haptic engine, enabling dynamic responses based on user behavior.
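As a sketch of how sensor data might drive a response, the class below turns a stream of force readings into press and release events using two thresholds (hysteresis), so a finger hovering near the threshold does not trigger a burst of taps. The class name and threshold values are assumptions chosen for illustration.

```python
from typing import Optional


class PressDetector:
    """Convert normalized force readings (0.0-1.0) into press/release events."""

    def __init__(self, press_at: float = 0.6, release_at: float = 0.4):
        if release_at >= press_at:
            raise ValueError("release_at must be below press_at")
        self.press_at = press_at
        self.release_at = release_at
        self.pressed = False

    def update(self, force: float) -> Optional[str]:
        """Return "press", "release", or None for one new sensor reading."""
        if not self.pressed and force >= self.press_at:
            self.pressed = True
            return "press"   # a haptic engine could fire a click here
        if self.pressed and force <= self.release_at:
            self.pressed = False
            return "release"  # and a lighter click here
        return None
```

Feeding it the readings 0.1, 0.65, 0.55, 0.62, 0.3 yields one press and one release, even though the force wobbles around the press threshold in between.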

Software: The Haptic Engine and Algorithms

The software layer interprets user actions and determines the appropriate haptic response. This includes:

- Haptic rendering frameworks (e.g., Apple’s Core Haptics, Android’s haptic feedback APIs).

- Waveform design tools that shape vibration patterns.

- Machine learning models that adapt feedback based on usage patterns.

For instance, Android’s HapticFeedbackConstants class defines a set of predefined feedback types that developers pass to View.performHapticFeedback() to trigger tactile effects during UI interactions, ensuring consistency across apps.
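To make the waveform design step from the list above more concrete, here is a minimal sketch that generates the samples for a short "click": a sine burst with an exponentially decaying envelope. The 170 Hz frequency, 12 ms duration, sample rate, and decay rate are all assumed defaults for illustration, not values from any shipping device or tool.

```python
import math
from typing import List


def click_waveform(freq_hz: float = 170.0,
                   duration_ms: float = 12.0,
                   sample_rate_hz: int = 8000,
                   decay_per_ms: float = 0.3) -> List[float]:
    """Return normalized drive samples (-1.0 to 1.0) for a decaying sine burst."""
    count = int(sample_rate_hz * duration_ms / 1000.0)
    samples = []
    for i in range(count):
        t = i / sample_rate_hz                      # time in seconds
        envelope = math.exp(-decay_per_ms * t * 1000.0)
        samples.append(envelope * math.sin(2 * math.pi * freq_hz * t))
    return samples
```

A faster decay gives a sharper, more button-like click; a slower one feels closer to a soft thud.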

Integration with Operating Systems

True system haptics are deeply embedded in the OS. They’re not just add-ons but part of the user interface design. iOS, for example, uses haptics for:

- Keyboard taps

- Alerts and notifications

- Accessibility features like VoiceOver

This tight integration ensures that haptic feedback feels natural and responsive, rather than jarring or delayed.

Applications of System Haptics Across Industries

System haptics are no longer limited to smartphones. Their applications span multiple sectors, transforming how we interact with technology.

Smartphones and Wearables

In mobile devices, system haptics enhance usability and accessibility. The iPhone’s 3D Touch combined pressure sensitivity with tactile feedback to simulate button presses; its successor, Haptic Touch, pairs a long press with the same kind of feedback. Wearables like the Apple Watch use haptics for:

- Notifications (gentle taps on the wrist)

- Workout guidance (pulsing during interval training)

- Navigation (directional taps for turn-by-turn guidance)

These subtle cues allow users to stay informed without looking at the screen, improving safety and convenience.
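One way to think about cues like these is as small timing patterns. The sketch below stores each cue as a list of (on, off) durations and flattens it into an alternating timing list that starts with an initial delay, a shape similar to what some platform vibration APIs accept. The pattern names and durations are hypothetical and do not reflect the patterns any real watch uses.

```python
from typing import List, Tuple

# Hypothetical tap patterns: each tuple is (on_ms, off_ms).
PATTERNS = {
    "turn_left": [(60, 120), (60, 120)],
    "turn_right": [(60, 120), (60, 120), (60, 120)],
}


def to_timings(pattern: List[Tuple[int, int]]) -> List[int]:
    """Flatten (on, off) pairs into [delay, on, off, on, ...] without a trailing off."""
    timings = [0]  # no initial delay before the first tap
    for on_ms, off_ms in pattern:
        timings.extend([on_ms, off_ms])
    return timings[:-1] if len(timings) > 1 else timings


def total_ms(pattern: List[Tuple[int, int]]) -> int:
    """Total time the cue occupies, from first tap to end of last tap."""
    return sum(to_timings(pattern))
```

Keeping the patterns clearly different in tap count makes them easier to tell apart without looking.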

Gaming and Virtual Reality

Gaming is where system haptics shine brightest. Modern controllers like the PlayStation DualSense and the Xbox Wireless Controller (with its impulse triggers) use haptics to simulate in-game actions:

- Feeling raindrops, terrain changes, or weapon recoil

- Dynamic resistance in triggers (e.g., drawing a bowstring)

- Emotional feedback (a heartbeat during suspenseful moments)

According to Sony’s official documentation, the DualSense controller uses dual actuators and adaptive triggers to deliver immersive, context-aware feedback that deepens player engagement.
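The bowstring example can be modeled as a simple resistance curve: no resistance until the trigger passes a start point, then resistance that grows as the trigger is pulled further. This is a hypothetical model for illustration only and is not Sony's API; the function name and parameters are invented for the sketch.

```python
def bowstring_resistance(travel: float,
                         start: float = 0.2,
                         max_force: float = 1.0) -> float:
    """Return normalized resistance for a trigger position in the range 0.0-1.0."""
    travel = min(max(travel, 0.0), 1.0)
    if travel <= start:
        return 0.0  # free travel before the "string" engages
    # Resistance ramps linearly from 0 at `start` to max_force at full pull.
    return max_force * (travel - start) / (1.0 - start)
```

A game could swap the linear ramp for a steeper curve to make a heavier bow feel different from a light one.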

“The DualSense doesn’t just vibrate—it tells a story through touch.” — IGN Review

Automotive and Driver Assistance

Car manufacturers are integrating system haptics into steering wheels, seats, and pedals to improve safety. Examples include:

- Steering wheel vibrations for lane departure warnings

- Seat alerts for blind-spot detection

- Haptic pedals that resist acceleration in eco-driving modes

BMW and Tesla use haptic feedback in their infotainment systems to confirm touchscreen inputs without visual confirmation, reducing driver distraction.

Benefits of System Haptics in User Experience

The value of system haptics goes beyond novelty. They offer tangible improvements in usability, accessibility, and emotional connection.

Enhanced Usability and Feedback Accuracy

Haptic feedback provides immediate confirmation of user actions. When you press a virtual button, a subtle tap confirms the input, reducing uncertainty. This is especially useful on touchscreens where there’s no physical keypress.

- Reduces input errors by 30% (per a 2021 University of Glasgow study)

- Improves typing speed on virtual keyboards

- Enables blind interaction with devices

This makes system haptics essential for high-precision tasks like medical simulations or industrial controls.

Accessibility for Visually Impaired Users

For users with visual impairments, system haptics serve as a critical communication channel. Devices like the Apple Watch use customized tap patterns to convey information:

- Time-telling via haptic chimes

- Navigation cues through directional pulses

- Alerts for incoming calls or messages

Apple’s VoiceOver feature combines screen reading with haptic feedback, allowing users to navigate interfaces confidently without sight.

Emotional and Immersive Engagement

Haptics can evoke emotions. A soft pulse during a meditation app, a sharp jolt in a horror game, or a rhythmic beat synced to music—all create deeper emotional resonance.

- Increases user retention in apps by up to 25%

- Enhances storytelling in AR/VR experiences

- Builds brand loyalty through distinctive tactile signatures

Brands like Tesla use unique haptic patterns for door unlocking, creating a memorable user experience that reinforces brand identity.

Challenges and Limitations of Current System Haptics

Despite their potential, system haptics face several technical and practical hurdles.

Power Consumption and Battery Drain

Haptic actuators, especially high-performance ones, consume significant power. Continuous use can reduce battery life by up to 15%, according to a 2022 report by AnandTech. This is a major concern for wearables and mobile devices.

- Piezoelectric actuators are more energy-efficient than LRAs

- Adaptive haptics that scale intensity based on context help conserve power

- Future solutions may include energy-harvesting haptics

Optimizing haptic efficiency without sacrificing quality remains a key challenge for engineers.
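A rough back-of-the-envelope estimate shows how pulse length and frequency of use feed into battery cost. The formula is just power multiplied by time; every number in the usage note is an assumed, illustrative value rather than a measurement.

```python
def haptic_energy_mwh(voltage_v: float, current_ma: float,
                      pulse_ms: float, pulses_per_day: int) -> float:
    """Energy spent on haptic pulses per day, in milliwatt-hours."""
    power_mw = voltage_v * current_ma                 # V * mA = mW
    active_hours = pulse_ms / 1000.0 / 3600.0 * pulses_per_day
    return power_mw * active_hours


def battery_share(energy_mwh: float, battery_mah: float,
                  battery_v: float) -> float:
    """Fraction of one full battery charge that the energy represents."""
    return energy_mwh / (battery_mah * battery_v)
```

For example, assuming a 2 V, 100 mA actuator firing 5,000 pulses of 20 ms each, the daily cost comes to about 5.6 mWh, or roughly 0.5% of an assumed 300 mAh, 3.8 V wearable battery. Longer or continuous effects scale that figure up quickly, which is why short pulses matter.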

Standardization and Fragmentation

Unlike audio or video, haptics lack universal standards. Each manufacturer uses proprietary systems:

- Apple’s Taptic Engine

- Sony’s DualSense haptics

- Google’s Android Haptics API

This fragmentation makes it difficult for developers to create consistent experiences across platforms. Cross-vendor efforts such as the Khronos Group’s OpenXR standard, which includes a haptic output API, aim to narrow the gap.

“Without standardization, haptics will remain a fragmented, underutilized modality.” — Neil McDonnell, Haptics Researcher, University of York

User Fatigue and Overstimulation

Excessive or poorly designed haptics can lead to sensory overload. Users may disable feedback if it feels intrusive or repetitive. A 2023 survey by Pew Research Center found that 42% of users turn off haptics due to annoyance.

- Best practices include customizable intensity and duration

- Context-aware systems that reduce feedback in quiet environments

- User education on haptic benefits to encourage adoption

Designers must balance immersion with user comfort to avoid backlash.
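Two of the practices above, user-adjustable intensity and avoiding repetitive feedback, can be combined in a small gate that sits in front of the actuator. The class name, the 100 ms minimum interval, and the scaling rule are assumptions made for this sketch.

```python
from typing import Optional


class HapticGate:
    """Apply a user intensity setting and drop effects that arrive too close together."""

    def __init__(self, min_interval_ms: int = 100, user_scale: float = 1.0):
        self.min_interval_ms = min_interval_ms
        self.user_scale = user_scale      # 0.0 turns haptics off entirely
        self.last_ms: Optional[int] = None

    def request(self, now_ms: int, amplitude: float) -> Optional[float]:
        """Return the amplitude to play, or None if the effect should be skipped."""
        if self.user_scale <= 0.0:
            return None
        if self.last_ms is not None and now_ms - self.last_ms < self.min_interval_ms:
            return None  # too soon after the previous effect
        self.last_ms = now_ms
        return min(1.0, amplitude * self.user_scale)
```

With a user scale of 0.5, a request at 0 ms plays at half strength, a second request at 50 ms is dropped, and one at 120 ms plays again.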

Innovations and Future Trends in System Haptics

The future of system haptics is not just about better vibrations—it’s about redefining how we perceive digital interactions.

Ultrasound and Mid-Air Haptics

Emerging technologies like ultrasound haptics allow users to feel virtual objects without physical contact. The platform from Ultrahaptics (now Ultraleap) uses focused ultrasound waves to create tactile sensations in mid-air.

- Used in automotive dashboards to reduce touchpoints

- Applied in VR for touchless interaction

- Enables sterile interfaces in medical environments

This technology could eliminate the need for physical buttons entirely, paving the way for truly gesture-based interfaces.
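The focusing idea can be illustrated with basic phased-array timing: each transducer fires with a delay chosen so that all waves reach the focal point at the same moment. The sketch below computes those delays from geometry alone. It is a simplified model under stated assumptions (a fixed speed of sound, point-like emitters) and ignores modulation and the other details a real system handles.

```python
import math
from typing import List, Sequence

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at room temperature


def focus_delays(transducers: Sequence[Sequence[float]],
                 focal_point: Sequence[float]) -> List[float]:
    """Return per-transducer firing delays (seconds) that align arrival times."""
    distances = [math.dist(t, focal_point) for t in transducers]
    farthest = max(distances)
    # The farthest emitter fires first (delay 0); nearer ones wait.
    return [(farthest - d) / SPEED_OF_SOUND_M_S for d in distances]
```

For three emitters in a row 5 cm apart and a focal point 20 cm above the middle one, the two outer emitters fire immediately and the center emitter waits a few tens of microseconds.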

Haptic Suits and Full-Body Feedback

Companies like Teslasuit (unrelated to the car company) and bHaptics are developing haptic suits that deliver immersive feedback across the body. These suits use multiple actuators to simulate:

- Impact from explosions in games

- Environmental effects like wind or heat

- Emotional cues like a virtual hug

While currently niche, haptic suits are expected to become mainstream in VR fitness, training simulations, and telepresence.

“The next frontier is full-body haptics—where your entire body becomes the interface.” — Dr. Karon MacLean, University of British Columbia

AI-Driven Adaptive Haptics

Artificial intelligence is being used to personalize haptic feedback. Machine learning models analyze user behavior and adjust:

- Vibration intensity based on grip strength

- Feedback patterns based on emotional state (detected via biometrics)

- Haptic profiles for different environments (e.g., quiet office vs. noisy street)

Google’s AI research team has demonstrated adaptive haptics that learn user preferences over time, ensuring feedback feels intuitive and non-intrusive.
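As a very small stand-in for "learning preferences over time", the sketch below nudges a stored intensity toward whatever level the user most recently chose, using an exponential moving average. This is not Google's method or a machine learning model; it is a deliberately simple illustration, and the function name and smoothing factor are assumptions.

```python
def update_preference(current: float, observed: float, alpha: float = 0.2) -> float:
    """Blend the stored intensity (0.0-1.0) with a newly observed user choice."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    blended = (1.0 - alpha) * current + alpha * observed
    return min(1.0, max(0.0, blended))
```

A small `alpha` makes the profile change slowly and ignore one-off adjustments; a larger one follows the user's latest choice more closely.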

How to Optimize System Haptics for Your Devices

Whether you’re a developer or a user, optimizing system haptics can significantly improve your experience.

For Developers: Best Practices in Haptic Design

Creating effective haptic feedback requires more than just triggering vibrations. Follow these guidelines:

- Use short, crisp pulses for confirmations (e.g., button presses)

- Avoid long, continuous vibrations that cause fatigue

- Match haptic intensity to visual cues (e.g., stronger pulse for red alerts)

- Allow user customization of haptic strength and style

Apple’s Human Interface Guidelines recommend using haptics sparingly and purposefully to maintain their impact.

For Users: Customizing Haptic Settings

Most modern devices allow users to tweak haptic feedback. Here’s how:

- iOS: Go to Settings > Sounds & Haptics > Keyboard Feedback to turn keyboard haptics on or off.

- Android: Look under Settings > Sound & vibration > Vibration & haptics to customize feedback. The exact path varies by manufacturer and Android version, and some devices place it under Accessibility.

- Wearables: Adjust haptic strength in companion apps like Garmin Connect or Samsung’s Galaxy Wearable.

Experiment with settings to find a balance between awareness and comfort.

Testing and Measuring Haptic Effectiveness

For product teams, evaluating haptic performance is crucial. Methods include:

- User testing with subjective feedback (e.g., “How natural did this feel?”)

- Objective metrics like latency, amplitude, and frequency response

- Biometric measurements (skin conductance, heart rate) to assess emotional impact

Tools like the Hapkit by Stanford University enable developers to prototype and test haptic interactions in real time.
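The objective metrics mentioned above can be computed from fairly simple logs. The sketch below assumes two kinds of data: timestamps for each input and the matching haptic output, and a list of accelerometer samples recorded while an effect plays. The function names are hypothetical, and the zero-crossing method is only a rough frequency estimate that works best on clean, single-frequency signals.

```python
import math
import statistics
from typing import Dict, List


def latency_stats(input_ms: List[float], output_ms: List[float]) -> Dict[str, float]:
    """Mean and worst-case delay between each input and its haptic output."""
    latencies = [out - inp for inp, out in zip(input_ms, output_ms)]
    return {"mean": statistics.mean(latencies), "max": max(latencies)}


def rms(samples: List[float]) -> float:
    """Root-mean-square amplitude of the recorded vibration."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def zero_crossing_hz(samples: List[float], sample_rate_hz: float) -> float:
    """Estimate frequency by counting sign changes (two per cycle)."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    duration_s = (len(samples) - 1) / sample_rate_hz
    return crossings / (2 * duration_s)
```

Tracking the worst-case latency alongside the mean is useful because a single late tap is often what users notice.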

What are system haptics?

System haptics are advanced tactile feedback systems that use actuators, sensors, and software to deliver realistic touch sensations in electronic devices, enhancing user interaction across smartphones, wearables, and gaming consoles.

How do system haptics improve user experience?

They provide immediate feedback, improve accessibility for visually impaired users, reduce input errors, and create emotional engagement by simulating real-world textures and forces.

Which devices use the most advanced system haptics?

The Apple iPhone (Taptic Engine), Sony DualSense controller, Apple Watch, and Tesla vehicles are among the leaders in implementing cutting-edge system haptics.

Can haptics be customized by users?

Yes, most modern devices allow users to adjust haptic intensity, duration, and style through settings menus or companion apps, ensuring a personalized experience.

What’s the future of system haptics?

The future includes mid-air haptics, full-body suits, AI-driven adaptive feedback, and standardized APIs that will make touch-based interactions more immersive, intuitive, and universally accessible.

System haptics are transforming the way we interact with technology—moving beyond sight and sound to engage our sense of touch. From smartphones to VR, cars to medical devices, they enhance usability, accessibility, and emotional connection. While challenges like power consumption and standardization remain, innovations in ultrasound, AI, and full-body feedback promise a future where digital interactions feel as real as the physical world. As this technology evolves, one thing is clear: the future of human-computer interaction is not just seen or heard—it’s felt.
