System Failure: 7 Shocking Causes and How to Prevent Them

Ever wondered what happens when everything suddenly stops working? From power grids to software networks, system failure can strike anywhere, anytime—often with devastating consequences. Let’s dive into the hidden mechanics behind these breakdowns and how we can stop them before they happen.

What Is a System Failure?

A system failure occurs when a network, machine, or process stops functioning as intended, leading to operational disruption, data loss, or even safety hazards. These failures aren’t limited to technology—they span across engineering, biology, economics, and social infrastructures. Understanding what constitutes a system failure is the first step toward preventing it.

Defining System and Subsystem Boundaries

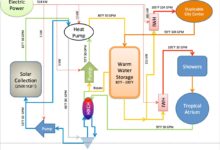

Every system, whether mechanical, digital, or organizational, consists of interconnected components that rely on each other to function. A subsystem might be a server in a data center, a valve in a pipeline, or a department in a company. When one subsystem fails, it can trigger a cascade effect across the entire system.

For example, on October 4, 2021, a faulty command issued during routine maintenance disconnected Facebook's backbone network. Its DNS servers, no longer able to reach the data centers, withdrew their BGP route advertisements, so Facebook, Instagram, and WhatsApp became unreachable worldwide for roughly six hours. This shows how tightly coupled modern systems are: one weak link can bring down an empire. Learn more about this event on Meta’s Engineering Blog.

- Systems are defined by inputs, processes, outputs, and feedback loops.

- Subsystems operate within larger frameworks and often have dependencies.

- Failure in one area can propagate when components are poorly isolated or lack redundancy.
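To make the cascade effect concrete, here is a minimal Python sketch that walks a dependency graph and reports every component that goes down when one fails. It assumes a simple model in which a component fails if any of its hard dependencies fails; the component names and the `failed_components` function are hypothetical and not taken from any real incident.

```python
from collections import deque
from typing import Dict, Set


def failed_components(depends_on: Dict[str, Set[str]], initial_failure: str) -> Set[str]:
    """Return every component that stops working when `initial_failure` fails.

    Model assumption: a component fails if ANY of its hard dependencies fails
    (no redundancy, no graceful degradation).
    """
    # Invert the graph so we can ask "who depends on this component?"
    dependents: Dict[str, Set[str]] = {}
    for component, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(component)

    # Breadth-first search outward from the initial failure.
    failed = {initial_failure}
    queue = deque([initial_failure])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, set()):
            if dependent not in failed:
                failed.add(dependent)
                queue.append(dependent)
    return failed


# Hypothetical topology: each component maps to the components it needs.
topology = {
    "dns": set(),
    "db": set(),
    "auth": {"dns"},
    "api": {"auth", "db"},
    "web": {"api"},
    "batch": {"db"},
}

print(sorted(failed_components(topology, "dns")))  # ['api', 'auth', 'dns', 'web']
```

Losing `dns` takes down everything that depends on it, directly or indirectly, while `batch`, which only needs `db`, survives. Adding redundancy amounts to relaxing the "any dependency" rule for some edges.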

Types of System Failures

Not all system failures are created equal. They vary based on origin, impact, and duration. Common types include:

- Hardware Failure: Physical components like hard drives, processors, or sensors stop working.

- Software Failure: Bugs, memory leaks, or unhandled exceptions crash applications.

- Network Failure: Connectivity loss disrupts communication between nodes.

- Human Error: Mistakes in configuration, deployment, or maintenance cause unintended outages.

- Environmental Failure: Natural disasters, power surges, or temperature extremes damage infrastructure.

“A system is only as strong as its weakest component.” — Unknown Engineer

Common Causes of System Failure

Behind every major system failure lies a chain of events, often starting with something small. Identifying the root causes helps organizations build resilience and avoid repeating past mistakes.

Poor Design and Architecture

One of the most insidious causes of system failure is flawed design. Systems built without scalability, fault tolerance, or proper error handling are ticking time bombs. For instance, tightly coupled architectures with no isolation between components, such as a monolith in which one misbehaving module can exhaust resources shared by all the others, are prone to cascading failures.

Consider the 2012 Knight Capital Group incident, in which a faulty software deployment caused $440 million in losses in just 45 minutes. New code was not copied to one of the firm's eight trading servers, and a repurposed configuration flag accidentally reactivated obsolete code on that server. Deployment safeguards such as automated, verified rollouts, canary deployments, and rollback mechanisms could have contained the damage.

- Lack of modularity increases risk of widespread failure.

- Inadequate load testing leads to collapse under real-world stress.

- Poor API design creates bottlenecks and integration issues.
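Fault tolerance and error handling are design decisions, not afterthoughts. One widely used pattern for stopping a failing dependency from dragging its callers down is the circuit breaker: after repeated failures, calls fail fast for a cooling-off period instead of piling more load onto the struggling component. The sketch below is a minimal, single-threaded illustration; the class names, thresholds, and the injectable `clock` parameter are assumptions for this example, not any particular library's API.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the breaker is closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: fail fast instead of adding load to a struggling dependency.
                raise CircuitOpenError("dependency unavailable; failing fast")
            # Half-open: the cooling-off period is over, so allow a trial call.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip, or re-trip after a failed trial
            raise
        # Any success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

In use, each call to a remote service would be wrapped, e.g. `breaker.call(lambda: fetch_inventory())`, where `fetch_inventory` is a hypothetical client function. A multi-threaded version would also need a lock and would typically let only one trial request through in the half-open state.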

Insufficient Redundancy and Failover Mechanisms

Redundancy ensures that if one component fails, another takes over seamlessly. Yet, many systems operate with single points of failure (SPOFs), making them vulnerable.
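The value of removing single points of failure can be quantified with basic probability. Assuming failures are independent (a strong assumption, since shared power, networks, or software bugs often correlate them), n redundant replicas where any one can carry the load have availability 1 - (1 - a)^n, while components that must all work multiply their availabilities. The figures in this sketch are hypothetical.

```python
def parallel_availability(a: float, n: int) -> float:
    """Availability of n redundant replicas, any one of which can serve.

    Assumes each replica is independently up with probability `a`.
    """
    return 1 - (1 - a) ** n


def series_availability(*components: float) -> float:
    """Availability of components that must ALL be up (a chain with no redundancy)."""
    result = 1.0
    for a in components:
        result *= a
    return result


# Hypothetical figures: a server that is up 99% of the time.
single = parallel_availability(0.99, 1)
pair = parallel_availability(0.99, 2)
print(f"one server:   {single:.4%}")  # one server:   99.0000%
print(f"two replicas: {pair:.4%}")    # two replicas: 99.9900%

# A single 99.9%-available load balancer in front of the pair caps the total.
print(f"with SPOF:    {series_availability(0.999, pair):.4%}")  # with SPOF:    99.8900%
```

In this idealized model a second server cuts expected downtime a hundredfold, yet the un-replicated load balancer still dominates the result: that is the arithmetic behind eliminating SPOFs.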

The 2003 Northeast Blackout, which affected 55 million people across the U.S. and Canada, began when overloaded transmission lines in Ohio sagged into trees. A key contributing factor was a software failure that silently disabled the alarm system in FirstEnergy's control room: with no working alarms and no backup monitoring, operators were unaware of the growing overloads until it was too late. The final report of the U.S.-Canada Power System Outage Task Force, published by the U.S. Department of Energy, highlights how missing redundancy played a critical role. Read the full analysis at DOE Blackout Report.

- Redundant power supplies prevent downtime during outages.

- Data replication across zones protects against storage loss.

- Automatic failover systems reduce recovery time significantly.
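Automatic failover depends on detecting failure in the first place, which is what the 2003 control room lacked when its alarm system stopped working unnoticed. A common approach is heartbeats: each node periodically reports in, and a controller treats silence beyond a timeout as failure. The sketch below is hypothetical; `FailoverController`, its node names, and its timeout are illustrative, and it omits the fencing a real system needs so that a primary that was merely slow cannot keep acting as active (split-brain).

```python
import time
from typing import Callable, Dict, List, Optional


class FailoverController:
    """Promote the next healthy node when the active one stops sending heartbeats."""

    def __init__(self, nodes: List[str], timeout: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.nodes = nodes  # ordered by preference: primary first
        self.timeout = timeout
        self.clock = clock
        start = clock()
        self.last_seen: Dict[str, float] = {node: start for node in nodes}
        self.active = nodes[0]

    def heartbeat(self, node: str) -> None:
        """Record that `node` reported in just now."""
        self.last_seen[node] = self.clock()

    def is_alive(self, node: str) -> bool:
        return self.clock() - self.last_seen[node] <= self.timeout

    def check(self) -> Optional[str]:
        """Return the node that should be active, failing over if needed."""
        if self.is_alive(self.active):
            return self.active
        for node in self.nodes:
            if node != self.active and self.is_alive(node):
                self.active = node  # automatic failover
                return node
        return None  # nothing healthy: escalate to humans


# Hypothetical usage with a fake clock so the example is deterministic.
now = [0.0]
controller = FailoverController(["primary", "standby"], timeout=5.0, clock=lambda: now[0])
now[0] = 4.0
controller.heartbeat("standby")  # the primary has been silent since t=0
print(controller.check())        # primary (silent for 4s, within the 5s timeout)
now[0] = 6.0
print(controller.check())        # standby (primary silent for 6s)
```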

Case Studies of Major System Failures

History is littered with high-profile system failures that offer valuable lessons. By studying these real-world examples, we can identify patterns and improve future designs.

The Challenger Space Shuttle Disaster (1986)

One of the most tragic examples of system failure occurred on January 28, 1986, when the Space Shuttle Challenger broke apart 73 seconds after launch, killing all seven crew members. The immediate cause was the failure of an O-ring seal in the right solid rocket booster; in the cold, the rubber O-rings lost the resiliency they needed to seal the joint.

However, the deeper issue was organizational. Engineers at Morton Thiokol had warned NASA about launching in low temperatures, but their concerns were overridden due to schedule pressure. This case illustrates how both technical flaws and management failures contribute to system collapse.

- Technical flaw: O-ring material lost resiliency in the cold; it was about 36°F at launch, colder than any previous shuttle launch.

- Organizational flaw: Decision-making ignored expert warnings.

- Communication breakdown between engineering and leadership.

“For a successful technology, reality must take precedence over public relations, for nature cannot be fooled.” — Richard P. Feynman

Amazon Web Services Outage (2017)

In February 2017, while debugging a slow billing system in Amazon’s S3 storage service in the US-EAST-1 region, an engineer ran a routine command with one of its inputs entered incorrectly. The command was meant to remove a small number of servers but took a much larger set offline, disrupting thousands of websites and apps that relied on AWS.

This incident exposed how even the most robust cloud platforms are vulnerable to human error. It also highlighted how heavily modern digital services depend on centralized infrastructure. The outage lasted about four hours and impacted companies like Slack, Trello, and Airbnb.

- Mistyped command led to unintended server shutdown.

- Lack of safeguards around administrative tools.

- Global impact due to concentration of services in one region.
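Amazon's post-mortem described changing the tool so that it removes capacity more slowly and refuses to take any subsystem below its minimum required capacity. A hypothetical guard in that spirit might look like the Python sketch below; the function name, parameters, and thresholds are assumptions for illustration, not AWS's actual tooling.

```python
from typing import List


class CapacityGuardError(ValueError):
    """Raised when a capacity-removal request fails a safety check."""


def plan_removal(active_servers: int, requested: int,
                 min_required: int, max_per_step: int) -> List[int]:
    """Validate a request to remove servers and split it into small batches.

    Hypothetical safeguards:
      * refuse any request that would leave fewer than `min_required` servers;
      * remove at most `max_per_step` servers per batch, so a mistake can be
        noticed and halted partway through.
    """
    if requested <= 0 or max_per_step <= 0:
        raise CapacityGuardError("requested and max_per_step must be positive")
    if active_servers - requested < min_required:
        raise CapacityGuardError(
            f"refusing to remove {requested} of {active_servers} servers: "
            f"would fall below the minimum of {min_required}"
        )
    batches = []
    remaining = requested
    while remaining > 0:
        batch = min(max_per_step, remaining)
        batches.append(batch)
        remaining -= batch
    return batches


print(plan_removal(100, 5, min_required=80, max_per_step=2))  # [2, 2, 1]

try:
    plan_removal(100, 50, min_required=80, max_per_step=2)  # a mistyped "5"
except CapacityGuardError as err:
    print(err)
```

The design choice is to make the dangerous path fail loudly and early: a typo that inflates the request is rejected outright, and even a valid request proceeds in small steps that can be stopped.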

Amazon later published a post-mortem detailing the incident and outlining new safeguards. You can read it at AWS S3 Outage Post-Mortem.

How System Failure Impacts Different Industries

The consequences of system failure vary widely depending on the industry. In some cases, it means inconvenience; in others, it can cost lives or destabilize economies.

Healthcare: When Lives Depend on Systems

In healthcare, system failure can be a matter of life and death. Electronic health records (EHR), medical imaging systems, and patient monitoring devices must operate reliably.

In 2020, a ransomware attack on Universal Health Services (UHS) forced over 400 facilities to revert to paper records. Emergency rooms were overwhelmed, surgeries delayed, and patient safety compromised. The attack exploited vulnerabilities in network security—a classic case of system failure with dire human consequences.

- Downtime in EHR systems delays diagnosis and treatment.

- Medical device malfunctions can lead to incorrect dosages or false readings.

- Cyberattacks on hospitals are rising, with average ransom demands exceeding $1 million.

Finance: The Cost of Downtime

Financial institutions rely on real-time transaction processing, fraud detection, and trading algorithms. Even a few minutes of system failure can result in massive financial losses.

In June 2012, the Royal Bank of Scotland (RBS) group suffered a system failure caused by a botched upgrade to its batch-processing software, leaving millions of RBS, NatWest, and Ulster Bank customers unable to access their accounts or make payments, some of them for weeks. Payments were delayed, direct debits failed, and the bank faced regulatory fines and reputational damage.

- Transaction processing delays erode customer trust.

- Regulatory compliance is jeopardized during outages.

- Algorithmic trading systems can go haywire during network latency spikes.

The UK Financial Conduct Authority (FCA) later criticized RBS for its lack of testing and recovery planning. More details are available at FCA Report on RBS Incident.

Preventing System Failure: Best Practices

While no system is immune to failure, proactive measures can drastically reduce the likelihood and impact of system failure. Prevention starts with culture, extends to design, and is maintained through continuous monitoring.

Implementing Robust Testing Protocols

Testing is the frontline defense against system failure. Organizations must go beyond basic unit tests to include integration, stress, chaos, and regression testing.

Netflix pioneered chaos engineering with its tool Chaos Monkey, which randomly terminates instances in production to test system resilience. This proactive approach helps ensure that failures are expected and handled gracefully instead of catching engineers off guard.

- Unit tests verify individual components.

- Integration tests ensure subsystems work together.

- Chaos engineering simulates real-world failures to improve robustness.
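The idea behind chaos engineering can be shown in miniature. The Python sketch below is an in-process simulation, not Chaos Monkey itself (which terminates real cloud instances): it marks random instances in a hypothetical pool as down, checks whether a simple load balancer still serves a request, and then restores the pool. `Instance`, `LoadBalancer`, and `chaos_experiment` are invented names for illustration.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Instance:
    name: str
    healthy: bool = True

    def handle(self, request: str) -> str:
        if not self.healthy:
            raise ConnectionError(f"{self.name} is down")
        return f"{self.name} served {request}"


@dataclass
class LoadBalancer:
    instances: List[Instance]

    def route(self, request: str) -> str:
        # Try instances in order, skipping any that are down.
        for instance in self.instances:
            try:
                return instance.handle(request)
            except ConnectionError:
                continue
        raise RuntimeError("no healthy instances left: outage")


def chaos_experiment(lb: LoadBalancer, rng: random.Random, kills: int = 1) -> bool:
    """Take `kills` random instances down, check the service still responds,
    then restore the pool. Returns True if the service survived."""
    victims = rng.sample(lb.instances, kills)
    for victim in victims:
        victim.healthy = False
    try:
        lb.route("GET /health")
        return True
    except RuntimeError:
        return False
    finally:
        for victim in victims:  # always restore the pool after the experiment
            victim.healthy = True


rng = random.Random(7)  # seeded so the experiment is reproducible
pool = LoadBalancer([Instance("a"), Instance("b"), Instance("c")])
print(chaos_experiment(pool, rng, kills=1))  # True
print(chaos_experiment(pool, rng, kills=3))  # False
```

Seeding the random generator makes each run reproducible, which matters when a failed experiment needs to be replayed and debugged. Real experiments start with a small blast radius, such as one instance, and widen it only as confidence grows.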

Adopting DevOps and CI/CD Pipelines

DevOps practices bridge the gap between development and operations, enabling faster, safer deployments. Continuous Integration and Continuous Deployment (CI/CD) pipelines automate testing and rollout, reducing human error.

By using automated rollback features, teams can quickly revert to stable versions if a new release causes system failure. Google, for example, deploys code thousands of times per day using CI/CD, yet maintains high uptime through rigorous automation and monitoring.

- Automated builds catch errors early.

- Staged rollouts (canary releases) limit blast radius.

- Monitoring tools detect anomalies in real time.
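A staged rollout only limits blast radius if something decides when to stop. One common approach is to compare the canary's error rate with the stable baseline and roll back automatically when the canary is clearly worse. The Python sketch below shows that decision; its thresholds (a 1.5x ratio, a 0.1% floor, and 500 requests before judging) are arbitrary assumptions, and real canary analysis usually also weighs latency and statistical significance.

```python
from dataclasses import dataclass


@dataclass
class ReleaseStats:
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(baseline: ReleaseStats, canary: ReleaseStats,
                    max_ratio: float = 1.5, floor: float = 0.001,
                    min_requests: int = 500) -> str:
    """Return 'wait', 'promote', or 'rollback' for a canary release.

    All thresholds are illustrative assumptions, not recommended values.
    """
    if canary.requests < min_requests:
        return "wait"  # too little traffic to judge yet
    # The absolute floor stops a near-zero baseline from turning a single
    # canary error into an automatic rollback.
    allowed = max(baseline.error_rate * max_ratio, floor)
    return "rollback" if canary.error_rate > allowed else "promote"


baseline = ReleaseStats(requests=100_000, errors=200)  # 0.2% errors
print(canary_decision(baseline, ReleaseStats(requests=100, errors=1)))     # wait
print(canary_decision(baseline, ReleaseStats(requests=1_000, errors=2)))   # promote
print(canary_decision(baseline, ReleaseStats(requests=1_000, errors=20)))  # rollback
```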

The Role of Human Factors in System Failure

Despite advances in automation, humans remain central to system design, operation, and recovery. Human error is a leading cause of system failure, but so is poor organizational culture.

Cognitive Biases and Decision-Making Under Stress

During crises, people often fall prey to cognitive biases like confirmation bias (favoring information that confirms existing beliefs) or normalcy bias (believing things will function as they normally do).

In the Three Mile Island nuclear accident (1979), operators misread instrument data, believing the reactor had too much water rather than too little, and throttled back the emergency core cooling water, worsening the partial meltdown. Their training hadn’t prepared them for ambiguous signals, and stress impaired their judgment.

- Training should include scenario-based simulations.

- Decision support systems can reduce cognitive load.

- Clear communication protocols prevent misinterpretation.

Organizational Culture and Psychological Safety

A culture that discourages dissent or punishes mistakes creates an environment where problems go unreported until it’s too late. Psychological safety—the belief that one won’t be punished for speaking up—is essential for early detection of system risks.

Google’s Project Aristotle found that psychological safety was the top factor in team effectiveness. In high-reliability organizations like aviation and nuclear power, crew resource management (CRM) training emphasizes open communication and shared responsibility.

- Leaders should encourage questioning and feedback.

- Blame-free post-mortems foster learning.

- Whistleblower protections ensure critical issues are reported.

Recovering from System Failure: Incident Response and Resilience

When system failure occurs, the focus shifts from prevention to recovery. A well-prepared incident response plan can minimize damage and accelerate restoration.

Building an Effective Incident Response Team

An incident response team (IRT) is responsible for managing system failures, from detection to resolution. Members typically include IT staff, security experts, communications officers, and executives.

Key responsibilities include:

- Identifying the scope and impact of the failure.

- Isolating affected components to prevent spread.

- Communicating updates to stakeholders and the public.

- Coordinating recovery efforts and documenting the event.

The National Institute of Standards and Technology (NIST) provides a comprehensive guide to handling such events, Special Publication 800-61 (Computer Security Incident Handling Guide). Access it at NIST Incident Response Guide.

Post-Mortem Analysis and Continuous Improvement

After a system failure is resolved, a post-mortem analysis should be conducted. This isn’t about assigning blame, but about understanding what went wrong and how to prevent recurrence.

Effective post-mortems include:

- A timeline of events leading to the failure.

- Root cause analysis using techniques like the 5 Whys or fishbone (Ishikawa) diagrams.

- Actionable recommendations for process or technical improvements.

- Follow-up tracking to ensure changes are implemented.
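A timeline becomes more useful when it is reduced to a few durations that show where time was lost: how long the failure went unnoticed, and how long recovery took once it was noticed. The sketch below assumes a hypothetical timeline format with ISO 8601 timestamps under the keys `started`, `detected`, and `resolved`; real post-mortem templates vary.

```python
from datetime import datetime
from typing import Dict


def incident_metrics(timeline: Dict[str, str]) -> Dict[str, float]:
    """Turn a post-mortem timeline into durations, in minutes.

    Assumes ISO 8601 timestamps under the keys 'started', 'detected',
    and 'resolved' (a hypothetical convention for this example).
    """
    t = {event: datetime.fromisoformat(ts) for event, ts in timeline.items()}

    def minutes_between(start: str, end: str) -> float:
        return (t[end] - t[start]).total_seconds() / 60

    return {
        "time_to_detect": minutes_between("started", "detected"),
        "time_to_resolve": minutes_between("detected", "resolved"),
        "total_duration": minutes_between("started", "resolved"),
    }


# Hypothetical incident: the failure began at 09:00 but alerts fired at 09:25.
timeline = {
    "started": "2024-03-01T09:00:00",
    "detected": "2024-03-01T09:25:00",
    "resolved": "2024-03-01T11:10:00",
}
print(incident_metrics(timeline))
# {'time_to_detect': 25.0, 'time_to_resolve': 105.0, 'total_duration': 130.0}
```

Here a 25-minute detection gap in a 130-minute incident would point the action items toward monitoring and alerting rather than the repair itself. Tracking these numbers across incidents also gives follow-up work something to measure.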

“Failures are finger posts on the road to achievement.” — C.S. Lewis

Emerging Technologies and the Future of System Reliability

As technology evolves, so do the risks and solutions for system failure. Artificial intelligence, quantum computing, and decentralized networks are reshaping how we design and maintain systems.

AI and Predictive Maintenance

Artificial intelligence is revolutionizing system reliability through predictive maintenance. By analyzing sensor data and usage patterns, AI models can forecast hardware failures before they occur.

For example, General Electric uses AI to monitor jet engines in real time, predicting component wear and scheduling maintenance proactively. This reduces unplanned downtime and extends equipment life.

- Machine learning detects anomalies in system behavior.

- Predictive models reduce reliance on scheduled maintenance.

- AI-powered alerts enable faster response times.
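Anomaly detection does not have to start with machine learning. A rolling z-score, which flags any reading far from the recent average, is a common statistical baseline that ML models are often compared against. The sketch below applies it to hypothetical sensor readings; the window size and the three-standard-deviation threshold are assumptions to tune per signal, and this is not GE's or any vendor's method.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Iterable, List


def detect_anomalies(readings: Iterable[float], window: int = 20,
                     threshold: float = 3.0) -> List[int]:
    """Return indices of readings that lie more than `threshold` standard
    deviations from the mean of the preceding `window` readings."""
    history: Deque[float] = deque(maxlen=window)
    anomalies: List[int] = []
    for i, value in enumerate(readings):
        if len(history) == window:  # wait until the window is full
            mu = mean(history)
            sigma = pstdev(history)
            # A perfectly flat window (sigma == 0) gives no scale to compare against.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        history.append(value)
    return anomalies


# Hypothetical vibration readings: a steady alternation, then a sudden spike.
readings = [10.0 if i % 2 == 0 else 10.2 for i in range(30)] + [15.0]
print(detect_anomalies(readings))  # [30]
```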

Blockchain and Decentralized Systems

Blockchain technology offers a new paradigm for system resilience by eliminating central points of failure. In a decentralized network, data is replicated across multiple nodes, making it harder for a single event to bring down the entire system.

Cryptocurrencies like Bitcoin have operated for over a decade despite constant attacks, and the serious incidents they have suffered, such as Bitcoin's 2010 value-overflow bug, were fixed without a lasting network-wide outage. This resilience comes from cryptographic security and distributed consensus mechanisms like Proof of Work.

- No single point of control increases resistance to attacks.

- Data immutability prevents tampering.

- Smart contracts automate processes without intermediaries.
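Part of the tamper resistance described above comes from hash-linking: each block stores the hash of the previous one, so editing any historical record breaks every link after it. The minimal sketch below shows only that tamper-evidence property, using SHA-256 from Python's standard library. It is not Bitcoin's actual block format and omits replication, consensus, and Proof of Work, which are what make rewriting history expensive rather than merely detectable.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index: int, data: str, prev_hash: str) -> str:
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps({"index": index, "data": data, "prev": prev_hash},
                         sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records: List[str]) -> List[Dict[str, Any]]:
    chain: List[Dict[str, Any]] = []
    prev = GENESIS_PREV
    for i, data in enumerate(records):
        digest = block_hash(i, data, prev)
        chain.append({"index": i, "data": data, "prev": prev, "hash": digest})
        prev = digest
    return chain


def verify_chain(chain: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and check each block points at its predecessor."""
    prev = GENESIS_PREV
    for block in chain:
        if block["prev"] != prev:
            return False
        if block_hash(block["index"], block["data"], block["prev"]) != block["hash"]:
            return False
        prev = block["hash"]
    return True


ledger = build_chain(["alice pays bob 5", "bob pays carol 2"])  # hypothetical records
print(verify_chain(ledger))  # True
ledger[0]["data"] = "alice pays bob 500"  # tamper with history
print(verify_chain(ledger))  # False
```

An attacker who recomputed every later hash would pass this check. In Bitcoin, Proof of Work and the many independent copies held by other nodes are what make such a rewrite impractical.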

However, blockchain is not immune to failure: smart contract bugs and 51% attacks remain risks. The 2016 DAO hack, in which an attacker exploited a reentrancy bug in a smart contract to drain Ether worth about $50 million, serves as a cautionary tale. Learn more at CoinDesk: The DAO Hack.

What is the most common cause of system failure?

The most common cause of system failure is human error, particularly in system configuration, software deployment, and maintenance procedures. Industry surveys frequently attribute a large share of IT outages to changes made by administrators, often changes that lacked testing or oversight.

How can organizations prepare for system failure?

Organizations should implement redundancy, conduct regular disaster recovery drills, adopt DevOps practices, and foster a culture of transparency and continuous learning. Having a documented incident response plan and performing post-mortem analyses are also critical.

Can AI prevent system failure?

Yes, AI can significantly reduce the risk of system failure by enabling predictive maintenance, anomaly detection, and automated response. However, AI systems themselves can fail if not properly trained or monitored, so they should be part of a broader reliability strategy.

What is chaos engineering?

Chaos engineering is the practice of intentionally introducing failures into a system to test its resilience. Netflix’s Chaos Monkey, for example, randomly terminates production instances, and companion tools in its Simian Army, such as Latency Monkey, inject artificial delays, confirming that the system can handle real-world disruptions without collapsing.

Why is psychological safety important in preventing system failure?

Psychological safety allows employees to report potential issues without fear of retribution. In high-stakes environments, early warnings from frontline staff can prevent small problems from escalating into major system failures.

System failure is an inevitable risk in any complex system, but it doesn’t have to be catastrophic. By understanding its causes—from poor design to human error—and implementing robust prevention and recovery strategies, organizations can build resilience. Technologies like AI and blockchain offer new tools for reliability, but culture and process remain foundational. The goal isn’t to eliminate failure entirely—that’s impossible—but to create systems that fail safely, recover quickly, and learn continuously.

Further Reading: